
More people, more often, are finding themselves dealing with the output of Machine-Learning (ML) software. With even a little practice you can spot when it’s happening. It makes me wonder what the future of human/ML interaction looks like. Herewith a few personal experiences.

Gmail spam · One of the world’s largest software systems with an ML component? Spam used to be such a major irritant but now mostly it isn’t except when it is. Obviously, Gmail judges itself by how well it avoids errors both positive (branding legit email as spam) and negative (letting spam through to get in my face).

It veers back and forth as they evolve the model. Occasionally some class of spamster figures out how to fool it — I remember when it was translators, most recently it’s subject “Invoice” with a Geek-squad logo in the message body.

But the positive errors are more damaging, because I don’t wanna visit the spam folder ever really, but I feel like sometimes I have to. And when the model goes off the rails in the positive direction, it’s baffling: Posts to mailing lists where I often speak up, notes from teachers about a child’s school issues, things that I really care about.

Protip: Type “/in:spam” into Gmail.

As I write this, Gmail has been running smoothly down the right side of the track in recent weeks, which is to say admitting a few spooky spams but filtering out nothing of any value. I bet it doesn’t last.

And I confess to enjoying certain rare classes of spam: Residential real-estate in Mozambique and Mongolia, used Heidelberg presses for sale, lurid love offerings in hilariously broken English.

Jaguar wipers · I mean the ones on my car’s windshield. On an extended drive in variable precipitation, they get dialed in and are amazingly great, flipping the blades back and forth at just the right speed for conditions between drizzle and downpour.

But boy, do they get confused when they’re initially trying to get the picture, flailing furiously at an occasional speckle on the glass, or steadfastly refusing to move when I’m looking through considerable snowflake impact.

Protip: Hit the wiper-fluid button. Which apparently causes it to flush all its caches and begin learning from scratch; almost always yields a sensible state.

Streaming smarts · Back to Google, which is probably OK because they’ve exposed as many humans to ML-model output as any other organization on the planet.

It’s taken literally years, but I have successfully battered YouTube Music’s Your Supermix stream into sanity. I signed up when Google Music kicked the bucket but offered a promise, on its dying breath, to pass along my personal library of twelve thousand or so songs.

It asked me, while getting started, to name some musicians I liked, but then literally crashed when I picked eighteen or so from its alphabetical list. Maybe the problem was they represented too many unique genres?

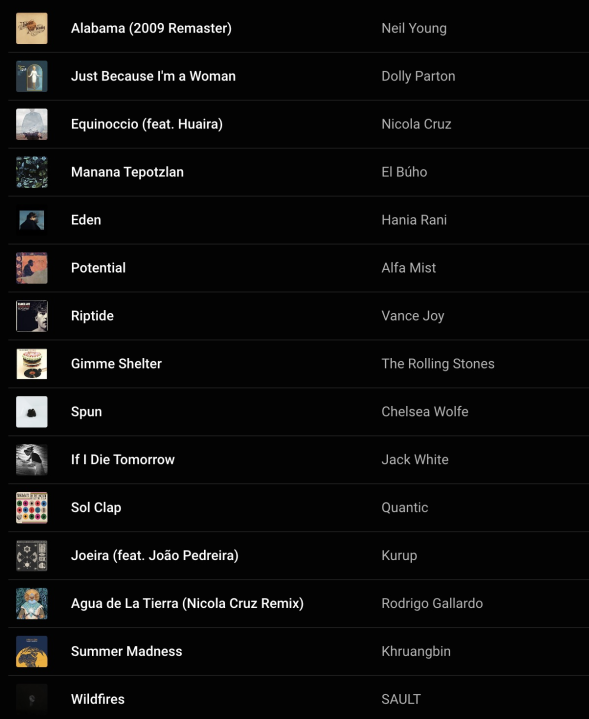

Anyhow, it eventually got the idea that I liked soft, dreamy stuff and besieged me with Bohren & der Club of Gore and Skinshape and Emancipator which, well OK, all of them, but could we rock out or hear a pop tune occasionally? Yeah, now we can. Here’s a random sampling of my personal “Supermix” on this Wednesday evening in July 2022:

A lot of these are good.

The nice thing is that on another evening it’ll bulk up on Coltrane and Muddy Waters and Brenda Lee. Also, it seems to be sensitive, in a good way, to time of day.

It’s not perfect. In particular, it’s completely fucking useless on classical music.

Protip: Be aggressive with the Thumbs Up/Down buttons.

This model is unlike any other in my experience in that it adapts to input stimuli over a time-frame measured in months-to-years.

The future is full of silent struggle · The above are just anecdata. But just as thirtysomethings are the first generation to have grown up never without Internet, today’s very young will be those who will grow up never not interacting with ML models. And remember, the ML model is never on your side; its primary agenda is the agenda of whoever paid to have it built.

I think people younger than I will become skilled at influencing ML models (a skill they mostly won’t even notice they have) and that this skill will increase more or less in parallel with the models’ builders’ skill in meeting the builders’ undisclosed internal business goals.

I retain some shreds of optimism that the humans will stay ahead, at least for a while.


From: Andrew Reilly (Jul 14 2022, at 17:27)

Who has the time to train these systems? I don't. Recommendation systems have never given me what I'm after. I don't want a machine to guess what I want: I want well designed affordances so that I can tell it, and it'll do it. I find Netflix' thumb buttons useless, because most movies are "meh", and there isn't a button for that.

I don't use any music systems with recommendation or prediction, but the few times I've tried the recommendations were so off-base that I didn't give the system another chance.

Humans are actually good at controlling things. We should always have a mode where we can tell the "AI" to get out of the way.


From: Rob (Jul 17 2022, at 11:15)

Well, human beings have several hundred thousand years' experience in propitiating the inscrutable, whether it's killing a black chicken (or a hecatomb for that matter), or carrying an onion on your belt and wearing blue beads to ward off the Evil Eye, or turning widdershins when you spill the salt, or whatever. I expect we'll be pretty good at developing countervailing strategies and incantations for dealing with our new gods.


From: Alex (Dec 06 2022, at 10:56)

Last.fm used to have a plugin interface that sprouted a lot of interesting tools. One of these compared the correlates of your listening (and consequently their recommendations) to the self-reported age and gender of the general user population. I found that trying to get a read-out 5 years younger and significantly more female than me seemed to optimize the music recommendations.
