Bot Droppings

I was idly watching my server logfiles today (it's pretty quiet on a Sunday afternoon, so it was mostly just the crawlers) and observed some puzzling behavior from the Googlebot. So I ran a few reports.

I used three weeks of data, for the weeks ending early in the mornings of Sunday Nov. 14th, 21st, and 28th. This is a useful time-span, because I republished the whole site on Sun. Nov. 7th to tweak the CSS a little bit, so basically every page has a last-modified at the start of this period. The observations were mostly pretty flat across the three weeks, and I’ll drill down where they’re not.

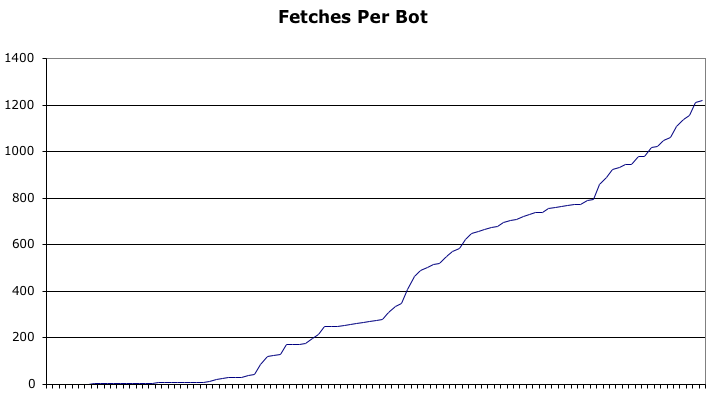

The Numbers · ongoing was visited by bots from 103 unique IP addresses. Not all of them came every week; there were 70 or 80 distinct addresses per week. Here’s a graph of how many pages each fetched.

The bots fetched just under 1,800 distinct URIs (just over a thousand actual pages, plus the category and date pages). There were 40,557 fetches, which is to say 23.108 fetches/page, which is to say each page got fetched slightly more than once per day.
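For anyone who wants to check the arithmetic, here is a minimal sketch that uses only the figures quoted above. The variable names are mine, and the 21-day span is just the three weeks of data counted in days.

```python
# Figures quoted in the post.
total_fetches = 40_557
fetches_per_uri = 23.108
days_observed = 21  # three weeks of log data

# Back out the number of distinct URIs implied by the two quoted figures.
implied_uris = total_fetches / fetches_per_uri

# Spread the per-URI fetch count over the observation period.
fetches_per_uri_per_day = fetches_per_uri / days_observed

print(f"implied distinct URIs: {implied_uris:.0f}")
print(f"fetches per URI per day: {fetches_per_uri_per_day:.2f}")
```

That works out to roughly 1,755 URIs ("just under 1,800") and about 1.1 fetches per URI per day.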

Of those forty thousand fetches, 94.9% got status code 200 (actual page fetch) and only 5% got 304 (page not changed since last fetch, so nothing was re-sent). In the first two weeks the 200 percentage was 98% or so, and in the week just ended 89%; one hopes that it’ll trend down as the weeks pass, because at the moment Google is downloading about 100 megabytes/week from ongoing.
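A tally like this is easy to reproduce from an Apache logfile. What follows is a rough sketch rather than the report I actually ran. The function name `status_shares` and the regular expression are illustrative assumptions, and the sample input is just the two Googlebot lines quoted later in this post.

```python
import re
from collections import Counter

# Assumption: the status code is the three-digit field right after the
# quoted request, followed by a byte count or "-".
STATUS_RE = re.compile(r'" (\d{3}) (?:\d+|-)')

def status_shares(lines):
    """Return {status_code: fraction_of_fetches} for the given log lines."""
    counts = Counter()
    for line in lines:
        m = STATUS_RE.search(line)
        if m:
            counts[m.group(1)] += 1
    total = sum(counts.values())
    return {code: n / total for code, n in counts.items()} if total else {}

SAMPLE = [
    'crawl-66-249-64-58.googlebot.com - - [28/Nov/2004:19:41:25 -0800] '
    '"GET /ongoing/When/200x/2003/12/08/Entropy HTTP/1.0" 304 - "-" '
    '"Googlebot/2.1 (+http://www.google.com/bot.html)"',
    'crawl-66-249-64-58.googlebot.com - - [28/Nov/2004:19:41:25 -0800] '
    '"GET /ongoing/When/200x/2003/12/08/Entropy HTTP/1.0" 200 5343 "-" '
    '"Googlebot/2.1 (+http://www.google.com/bot.html)"',
]

for code, share in sorted(status_shares(SAMPLE).items()):
    print(code, f"{share:.1%}")
```

To run it on a real log, pass an open file object instead of `SAMPLE`; filtering down to bot traffic is left out here.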

Weirdness · Here are two log lines that I saw going by just now; once I was alerted, I saw this pattern a few more times.

crawl-66-249-64-58.googlebot.com - - [28/Nov/2004:19:41:25 -0800] "GET /ongoing/When/200x/2003/12/08/Entropy HTTP/1.0" 304 - "-" "Googlebot/2.1 (+http://www.google.com/bot.html)"

crawl-66-249-64-58.googlebot.com - - [28/Nov/2004:19:41:25 -0800] "GET /ongoing/When/200x/2003/12/08/Entropy HTTP/1.0" 200 5343 "-" "Googlebot/2.1 (+http://www.google.com/bot.html)"

For those who don’t read Apache logfiles for fun, bots from the same address fetched the page twice in rapid succession, the first time getting a no-change signal and the second time actually getting the page.
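If you want to hunt for this pattern in your own logs, here is one possible sketch: it flags any 200 that follows a 304 for the same host and path within a short window. The name `find_double_fetches`, the regular expression, and the two-second default window are all my own assumptions, and the code assumes the log lines arrive in chronological order.

```python
import re
from datetime import datetime, timedelta

# Assumption: Apache common/combined log format, as in the two lines above.
LOG_RE = re.compile(
    r'^(?P<host>\S+) \S+ \S+ \[(?P<when>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3}) \S+'
)

def find_double_fetches(lines, window=timedelta(seconds=2)):
    """Return (host, path, time) for each 200 that closely follows a 304."""
    last_304 = {}  # (host, path) -> time of the most recent 304
    hits = []
    for line in lines:
        m = LOG_RE.match(line)
        if not m:
            continue  # skip lines that don't look like access-log entries
        key = (m["host"], m["path"])
        when = datetime.strptime(m["when"], "%d/%b/%Y:%H:%M:%S %z")
        if m["status"] == "304":
            last_304[key] = when
        elif m["status"] == "200":
            prev = last_304.pop(key, None)
            if prev is not None and when - prev <= window:
                hits.append((key[0], key[1], when))
    return hits

SAMPLE = [
    'crawl-66-249-64-58.googlebot.com - - [28/Nov/2004:19:41:25 -0800] '
    '"GET /ongoing/When/200x/2003/12/08/Entropy HTTP/1.0" 304 - "-" '
    '"Googlebot/2.1 (+http://www.google.com/bot.html)"',
    'crawl-66-249-64-58.googlebot.com - - [28/Nov/2004:19:41:25 -0800] '
    '"GET /ongoing/When/200x/2003/12/08/Entropy HTTP/1.0" 200 5343 "-" '
    '"Googlebot/2.1 (+http://www.google.com/bot.html)"',
]

for host, path, when in find_double_fetches(SAMPLE):
    print(host, path, when.isoformat())
```

The window is deliberately tight, since both fetches above landed in the same second; widen it if your log shows the pair spread further apart.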

Conclusion · I’ll mostly hold off on conclusions, let the web geeks out there draw their own. Except to say that if I were asked to coin a brief description of this crawling strategy, it would be: Brute force.