Web Decay Graph

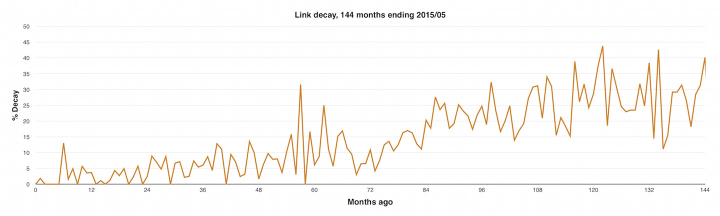

I’ve been writing this blog since 2003 and in that time have laid down, along with way over a million words, 12,373 hyperlinks. I’ve noticed that when something leads me back to an old piece, the links are broken disappointingly often. So I made a little graph of their decay over the last 144 months.

The “% Decay” value for each value of “Months Ago” is the percentage of links made in that month that have decayed. For example, just over 5% of the links I made in the month 60 months before May 2015, i.e. May 2010, have decayed.
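To make the metric concrete, here is a minimal Python sketch of the per-month calculation. It is not the Ruby script behind the graph; the function name `decay_by_month` and the `(months_ago, is_dead)` record shape are assumptions made for illustration, and the sample data is invented.

```python
from collections import defaultdict

def decay_by_month(links):
    """links: iterable of (months_ago, is_dead) pairs, one per hyperlink.

    Returns {months_ago: percentage of that month's links that are dead}.
    """
    totals = defaultdict(int)
    dead = defaultdict(int)
    for months_ago, is_dead in links:
        totals[months_ago] += 1
        if is_dead:
            dead[months_ago] += 1
    return {m: 100.0 * dead[m] / totals[m] for m in sorted(totals)}

# Invented sample: 20 links made 60 months ago (one now dead),
# plus 4 links made last month (all alive).
sample = [(60, False)] * 19 + [(60, True)] + [(1, False)] * 4
print(decay_by_month(sample))  # {1: 0.0, 60: 5.0}
```

Each month is scored independently of the others, so a month with only a handful of links can swing the curve sharply.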

Longer title · “A broad-brush approximation of URI decay focused on links selected for blogging by a Web geek with a camera, computed using a Ruby script cooked up in 45 minutes.” Mind you, the script took the best part of 24 hours to run, because I was too lazy to make it run a hundred or so threads in parallel.
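For a sense of what the parallel version might look like, here is a hedged Python sketch using a thread pool from the standard library. The original script was Ruby and is not shown here, so everything below is an assumption: the names `is_alive` and `check_links`, the use of HEAD requests, and the rule that any response below HTTP 400 counts as "alive".

```python
from concurrent.futures import ThreadPoolExecutor
import http.client
import urllib.error
import urllib.request

def is_alive(url, timeout=10):
    """Crude liveness probe (an assumption): a response below HTTP 400 counts as alive."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status < 400
    except (urllib.error.URLError, http.client.HTTPException, OSError, ValueError):
        # HTTP errors, DNS failures, timeouts and malformed URLs all count as dead.
        return False

def check_links(urls, probe=is_alive, workers=100):
    """Probe every URL on a pool of worker threads; returns {url: alive?}."""
    urls = list(urls)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves input order, so results line up with urls.
        return dict(zip(urls, pool.map(probe, urls)))

# Usage (performs real network requests):
#   status = check_links(["http://www.w3.org/Provider/Style/URI.html"])
```

Threads suit this job because each probe spends nearly all its time waiting on the network. The `probe` parameter is injectable so the pool logic can be exercised without touching the network.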

I suppose I could regress the hell out of the data and get a prettier line but the story these numbers are telling is clear enough.
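As one example of such a regression, here is a small sketch of an ordinary least-squares straight-line fit in plain Python. Whether decay is actually linear in time is an assumption, not something the data has been shown to support, and `fit_line` is a hypothetical helper name; the sample points are invented.

```python
def fit_line(points):
    """Ordinary least-squares fit of y = slope * x + intercept.

    points: list of (months_ago, percent_decayed) pairs containing
    at least two distinct months_ago values.
    """
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Invented points lying exactly on y = 0.1 * x.
slope, intercept = fit_line([(0, 0.0), (60, 6.0), (120, 12.0)])
print(round(slope, 6), round(intercept, 6))
```

The slope reads as "percentage points of decay per month", which is the single number a prettier line would boil the graph down to.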

Another way to get a smoother curve would be for someone at Google to throw a Map/Reduce at a historical dataset with hundreds of billions of links.

This is a very sad graph · But to be honest I was expecting worse. I wonder if, a hundred years after I’m dead, the only ones that remain alive will begin with “en.wikipedia.org”?

From: Nitin Badjatia (May 31 2015, at 12:02)

One can imagine this getting significantly worse if one of the mega url shorteners (bit.ly) goes under.

From: Pieter Lamers (May 31 2015, at 13:24)

That's why science publishing moved to DOIs. Even then you will need some maintenance effort, but at least it is possible to move and redirect.

From: Preston L. Bannister (May 31 2015, at 18:24)

A hundred years from now, Google (or the equivalent) will auto-suggest alternate URLs, and be mostly correct. :)

Sounds like humor, but introspecting web links, then extracting and inferring semantics, will only get better. More of a bottom-up semantic web.

The interesting bit is the apparent bump in the curve around five years. Small sample, so it might say more about Tim Bray's habits than the general web.

From: Norbert (May 31 2015, at 19:54)

How did you detect "decay"? Just based on HTTP codes, or by actually looking at the linked content? On my own blog, I found more often than I like that old links still "work" per HTTP, but now refer to something rather different from the content that I originally intended to refer to. It seems quite common that spammers take over domain names that the original owners don't want to pay for anymore, or that companies redirect links to content they don't care for anymore to stuff they want to promote today.

From: Charlie (May 31 2015, at 20:36)

Bret Victor posted some interesting thoughts on this subject a few days ago:

http://worrydream.com/TheWebOfAlexandria/

http://worrydream.com/TheWebOfAlexandria/2.html

From: Ole Eichhorn (May 31 2015, at 20:43)

Thanks for this, Tim; now I have to write a similar script and perform the analogous experiment :)

I, too, have noticed in passing that old links are often dead. Interestingly it feels like links to blogs are more likely to be okay than links to news sites, which are apparently more likely to be acquired, reorganize, die, or otherwise fail to preserve content.

In the bad old days most people hosted their blogs on their own domains; now they are much more likely to use a service like WordPress for hosting, or a service like Tumblr or Medium for posting. I wonder if that will make new content more or less likely to persist?

Cheers

From: Ramiro (Jun 01 2015, at 03:52)

Relevant article written by Tim Berners-Lee in 1998 and still reachable!

http://www.w3.org/Provider/Style/URI.html

From: Steven (Jun 01 2015, at 05:30)

The Wayback Machine will revive dead links in most cases. It's rather easy to control The Wayback Machine. If you look at the code for the Drupal text filter mentioned below, you'll find it can be done in two lines of code.

http://vertikal.dk/linkrot-solved-problem
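As a rough illustration of why this can be so short, here is a Python sketch that rewrites a link to point at the Wayback Machine snapshot closest to a given date. It is not the Drupal filter's code; the helper name `wayback_url` is hypothetical, and the sketch assumes the archive's `https://web.archive.org/web/<timestamp>/<url>` address form with a date-only timestamp.

```python
from datetime import date

def wayback_url(original_url, linked_on):
    """Build a Wayback Machine address for the snapshot nearest the given date."""
    timestamp = linked_on.strftime("%Y%m%d")
    return f"https://web.archive.org/web/{timestamp}/{original_url}"

print(wayback_url("http://www.w3.org/Provider/Style/URI.html", date(2015, 6, 1)))
# https://web.archive.org/web/20150601/http://www.w3.org/Provider/Style/URI.html
```

Using the date the link was made, rather than today's date, aims the reader at the content as it looked when it was cited.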

From: Ricardo Bánffy (Jun 01 2015, at 06:21)

I have had some moderate success with archive.org. Can you retry your graph with that as a second series?

From: Paul A Houle (Jun 01 2015, at 07:19)

To the DOI example I would say the problem that it solves is that scientists are held hostage by publishers who are in turn held hostage by CMS vendors.

If you have control of your software and you care about it, it is easy to maintain URL stability. The trouble is that academic institutions don't have the discipline to control that kind of thing and they don't care.

From: Herbert (Jun 01 2015, at 11:14)

We recently published results of a large-scale study that looked into approximately 600K links extracted from over 3M scholarly papers published between 1997 and 2012. Those were links to so-called web-at-large resources, i.e. not links to other scholarly papers. The study looked at link rot but also at the availability of representative archived snapshots in web archives. Various web archives that support the Memento "Time Travel for the Web" protocol (RFC 7089) were used, not just the Internet Archive. The write-up is in PLOS ONE (http://dx.doi.org/10.1371/journal.pone.0115253) and, as is to be expected, the news isn't good.

This work was done in the context of the Hiberlink project (http://hiberlink.org), a Mellon-funded collaboration between the Los Alamos National Laboratory and the University of Edinburgh. In addition to quantifying "reference rot" - the combination of link rot and content drift (cf. the comment by Norbert) - the project looks into strategies aimed at ameliorating it. An overview of proposed approaches to combat reference rot is on the Robust Links site (http://robustlinks.mementoweb.org). Specifically, check out the Link Decoration proposal (http://robustlinks.mementoweb.org/spec/) and the demo that illustrates how Link Decorations, combined with some simple JavaScript that leverages the Memento protocol and associated infrastructure, allow a user to readily obtain archived content for a linked resource from one of many web archives around the world (http://robustlinks.mementoweb.org/demo/uri_references_js.html). The simplest implementation of this approach only requires a machine-actionable page publication date (http://robustlinks.mementoweb.org/spec/#page-date) and the Robust Links JavaScript (https://github.com/mementoweb/robustlinks).

From: JZ (Jun 01 2015, at 19:45)

Check out http://perma.cc. It's working to solve the problem beginning with references in scholarly work and judicial opinions. We're working to integrate it with Herbert's Memento project.

The DOI is a good start but doesn't itself archive anything.

From: Jochen Römling (Jun 02 2015, at 01:57)

This is so sad! I'm still in the mindset of longevity and want the things I create to last a while. I want to bookmark stuff to remember it even a few years down the road when I want to recommend it to somebody because the topic came up in a conversation. Apparently, the younger generation doesn't care that much anymore. They live in Snapchat and whatever they post online is forgotten after a week and never looked at again. So sad!

Our local newspaper recently changed their CMS and all the links you had ever bookmarked to their articles were destroyed overnight. Of course there was no notice, so you couldn't grab the articles before they went away; there was only a "Hey, we improved our website, don't you like it?" No! I use Feedly for news reading and they have an option to save an HTML copy of articles you like to Dropbox. At least you have that.

From: Andy Jackson (Jun 02 2015, at 08:19)

We've been scanning our archived holdings of the UK web, and the rot rate is surprisingly high. After just one year, 20% of links are dead, but worse still a further 30% have changed so much as to be unrecognisable. Gory details here: http://anjackson.net/2015/04/27/what-have-we-saved-iipc-ga-2015/

From: Ted (Jun 03 2015, at 22:41)

I don't quite follow what I'm seeing, here. The graph seems to go down surprisingly steeply and often. Does that mean that links formerly broken suddenly re-appeared -- en masse?! Is each point the % of links broken for that one blog post? I'd love to see the graph showing "of all the URIs up to this point, n% are borken".
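The cumulative view asked for here could be computed along these lines. This is a sketch under stated assumptions: "up to this point" is read as "all links made within the last N months", the helper name `cumulative_decay` and the `(months_ago, total_links, dead_links)` input shape are hypothetical, and the sample numbers are invented.

```python
def cumulative_decay(monthly):
    """monthly: list of (months_ago, total_links, dead_links), in any order.

    Returns [(months_ago, percent dead among all links made that month or
    more recently)], walking from the newest month back to the oldest.
    """
    running_total = 0
    running_dead = 0
    curve = []
    for months_ago, total, dead in sorted(monthly):
        running_total += total
        running_dead += dead
        if running_total:  # skip leading months with no links at all
            curve.append((months_ago, 100.0 * running_dead / running_total))
    return curve

# Invented sample data.
print(cumulative_decay([(3, 100, 9), (1, 50, 0), (2, 50, 1)]))
# [(1, 0.0), (2, 1.0), (3, 5.0)]
```

Because every point pools all the links made since that month, a single sparse month moves the curve far less than it does in a per-month plot.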

From: Scott (Jun 04 2015, at 12:34)

I had the same thought as Ted. There's something off about this graph. So much volatility!
