In my position I probably shouldn’t have a favorite AWS product, just like you shouldn’t have a favorite child. I do have a fave service but fortunately I’m not an (even partial) parent; so let’s hope that’s OK. I’m talking about Amazon Simple Queue Service, which nobody ever calls by its full name.

I’d been thinking I should write on the subject, then saw a Twitter thread from Rick Branson (trust me, don’t follow that link) which begins: “Queues are bad, but software developers love them. You’d think they would magically fix any overload or failure problem. But they don’t, and bring with them a bunch of their own problems.” After that I couldn’t not write about queueing in general and SQS specifically.

SQS is nearly perfect · The perfect Web Service I mean: There are no capacity reservations! You can make as many queues as you want to, you can send as many messages as you want to, you can pull them off fast or slow depending how many readers you have. You can even just ignore them; there are people who’ll dump a few million messages onto a queue and almost never retrieve them, except when something goes terribly wrong and they need to recover their state. Those messages will age out and vanish after a little while (14 days is currently the max); but before they go, they’re stored carefully and are very unlikely to go missing.

Also, you can’t see hosts so you don’t have to worry about picking, configuring, or patching them. Win!

There are a bunch of technologies we couldn’t run at all without SQS, ranging from Amazon.com to modern Serverless stuff.

The API is the simplest thing imaginable: Send Messages, Receive Messages, Delete Messages. I love things that do one thing simply, quickly, and well. I can’t give away details, but there are lots of digits in the number of messages/second SQS handles on busy days. I can’t give away architectures, but the way the front-end and back-end work together to store messages quickly and reliably is drop-dead cool.
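To make the send/receive/delete lifecycle concrete, here is a toy in-memory sketch. It is not the SQS API or how SQS is built; `ToyQueue`, `visibility_timeout`, and the `now` parameter are names invented for illustration. The one behavior it borrows, loosely, is that a received message is hidden for a while and comes back if nobody deletes it.

```python
import itertools
import time


class ToyQueue:
    """In-memory stand-in for a send/receive/delete queue (hypothetical, not SQS)."""

    def __init__(self, visibility_timeout=30.0):
        self.visibility_timeout = visibility_timeout
        self._messages = {}      # message id -> body, in send order
        self._hidden_until = {}  # message id -> time it may be received again
        self._ids = itertools.count(1)

    def send(self, body):
        """Store a message and return its id."""
        msg_id = next(self._ids)
        self._messages[msg_id] = body
        self._hidden_until[msg_id] = float("-inf")  # receivable immediately
        return msg_id

    def receive(self, max_messages=1, now=None):
        """Return up to max_messages visible (id, body) pairs and hide them."""
        now = time.monotonic() if now is None else now
        out = []
        for msg_id, body in self._messages.items():
            if len(out) == max_messages:
                break
            if self._hidden_until[msg_id] <= now:
                self._hidden_until[msg_id] = now + self.visibility_timeout
                out.append((msg_id, body))
        return out

    def delete(self, msg_id):
        """Remove a message for good; the reader calls this after processing."""
        self._messages.pop(msg_id, None)
        self._hidden_until.pop(msg_id, None)


q = ToyQueue(visibility_timeout=30.0)
order_id = q.send("order-1")
first = q.receive(now=0.0)    # the reader gets the message
hidden = q.receive(now=10.0)  # still hidden while the reader works on it
again = q.receive(now=31.0)   # never deleted, so it is delivered again
q.delete(order_id)            # processing finished: now it is gone
```

The point of the third call is that forgetting to delete means the message shows up again, which is why a reader has to make all three moves.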

Why not entirely perfect? Well, SQS launched in 2006. Most parts of the service have been re-implemented at least once, but some moss has grown over the years. I sit next to the SQS team and know the big picture reasonably well, and I think we can make SQS cheaper and simpler to operate.

When it launched it cost 10¢ per thousand messages; now it’s 40¢ per million API calls. “Per-message” can be a bit tricky to work out because sending, receiving, and deleting make three calls per message, but then SQS helps you batch, and most high-volume apps do. Anyhow, it’s absurdly cheaper than back then, and I wonder whether, in a few years, that 40¢/million number will look as high as 10¢/thousand does today.
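The per-message arithmetic can be worked through in a few lines. This is a back-of-envelope sketch using only the two prices quoted above; the batch size of 10 is an assumption made for illustration, and the sketch ignores any free tier or payload-size billing rules.

```python
from fractions import Fraction

LAUNCH_CENTS_PER_THOUSAND_MESSAGES = 10  # price quoted in the text
CURRENT_CENTS_PER_MILLION_CALLS = 40     # price quoted in the text
CALLS_PER_MESSAGE = 3                    # send + receive + delete


def launch_dollars_per_million_messages():
    """Cost of a million messages at the launch price."""
    cents = Fraction(LAUNCH_CENTS_PER_THOUSAND_MESSAGES) * 1_000
    return float(cents / 100)


def current_dollars_per_million_messages(batch_size=1):
    """Cost of a million messages today, with batch_size messages per API call."""
    calls = Fraction(CALLS_PER_MESSAGE * 1_000_000, batch_size)
    cents = calls * CURRENT_CENTS_PER_MILLION_CALLS / 1_000_000
    return float(cents / 100)


launch = launch_dollars_per_million_messages()               # 100.0
unbatched = current_dollars_per_million_messages()           # 1.2
batched = current_dollars_per_million_messages(batch_size=10)  # 0.12 (assumed batch size)
```

So a million messages went from $100 to $1.20 unbatched, and to about 12¢ if every call carries ten messages.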

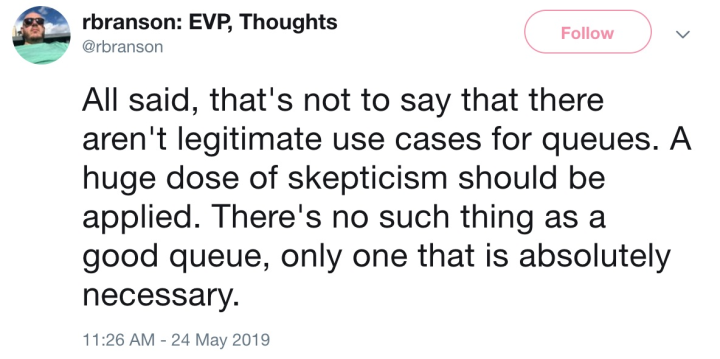

The opposition · So let’s go back to Mr Branson’s tweet-rant. He raises a bunch of objections to queues which I’ll try to summarize:

They can mask downstream failures.

They don’t necessarily preserve ordering (SQS doesn’t).

When they are ordered, you probably need to shard to lots of different streams and keep track of the shard readers.

They’re hard to capacity plan; it’s easy to fill up RAM and disks.

They don’t exert back-pressure against clients that are overrunning your system.

Here’s his conclusion.

While there are good queues, I agree with his sentiment. If you can build a straightforward monolithic app and never think about all this asynchronous crap, go for it! If your system is big enough that you need to refactor into microservices for sanity’s sake, but you can get away with synchronous call chains, you definitely should.

But if you have software components that need to be hooked together, and sometimes the upstream runs faster than the downstream can handle, or you need to scale components independently to manage load, or you need to make temporary outages survivable by stashing traffic-in-transit, well… a queue becomes “absolutely necessary”.
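A tiny simulation shows what "the upstream runs faster than the downstream can handle" looks like with a queue in between. It is a sketch with made-up numbers: the arrival pattern, the `service_rate` of 6, and the function name `simulate` are all assumptions, not measurements of any real system.

```python
def simulate(arrivals, service_rate):
    """Return the queue depth after each tick.

    arrivals: messages the upstream sends in each tick.
    service_rate: the most messages the downstream can take per tick.
    """
    depth = 0
    depths = []
    for arriving in arrivals:
        depth += arriving                 # upstream enqueues at its own pace
        depth -= min(depth, service_rate)  # downstream drains what it can
        depths.append(depth)
    return depths


# Quiet traffic, a two-tick burst, then quiet again (hypothetical numbers).
arrivals = [2, 2, 20, 20, 2, 2, 2, 2, 2, 2]
depths = simulate(arrivals, service_rate=6)
# depths == [0, 0, 14, 28, 24, 20, 16, 12, 8, 4]
```

The downstream never sees more than 6 messages per tick; the burst turns into a backlog that peaks at 28 and drains over the following ticks. That backlog is also the thing you would want to watch, since a depth that keeps growing means the downstream is not keeping up.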

The proportion of services I work on where queues are absolutely necessary rounds to 100%. And if you look at our customers, lots of them manage to get away without queues (good for them!) but a really huge number totally depend on them. And I don’t think that’s because the customers are stupid.

Mr Branson’s charges are accurate descriptions of queuing semantics; but what he sees as shortcomings, people who use queues see as features. Yeah, they mask errors and don’t exert back-pressure. So, suppose you have a retail website named after a river in Brazil, and you have fulfillment centers that deliver the stuff the website sells. You really want to protect the website from fulfillment-center errors and throttling. You want to know about those errors and throttling, and a well-designed messaging system should make that easy. Yeah, it can be a pain in the butt to capacity-plan a queue — ask anyone who runs their own. That’s why your local public-cloud provider offers them as a managed service. Yeah, some applications need ordering, so there are queuing services that offer it. Yeah, ordering often implies sharding, and so your ordered-queue service should provide a library to help with that.
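On the ordering-implies-sharding point, the usual trick is to route every message for a given key to the same shard, so that order is kept per key while shards are read in parallel. The following is a minimal sketch of that idea, not the library any particular queuing service ships; `shard_for`, `route`, and the sample keys are hypothetical.

```python
import hashlib


def shard_for(key, num_shards):
    """Map a key to a shard index that stays the same across runs and machines."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def route(messages, num_shards):
    """Distribute (key, payload) pairs across shards, keeping arrival order."""
    shards = [[] for _ in range(num_shards)]
    for key, payload in messages:
        shards[shard_for(key, num_shards)].append((key, payload))
    return shards


messages = [
    ("customer-a", "create order"),
    ("customer-b", "create order"),
    ("customer-a", "add item"),
    ("customer-b", "cancel order"),
    ("customer-a", "pay"),
]
shards = route(messages, num_shards=4)
```

Each customer's events land in exactly one shard in the order they were sent, so one reader per shard sees a consistent history for every key it owns. A stable hash is used instead of Python's built-in `hash()` because the latter is randomized per process for strings. The bookkeeping this sketch leaves out, such as tracking which reader owns which shard and what happens when the shard count changes, is the part that a helper library would need to cover.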

But wait, there’s more! · More kinds of queues, I mean. AWS has six different ones. Actually, that page hasn’t been updated since we launched Managed Streaming for Kafka, so I guess we have seven now.

We actually did a Twitch video lecture series to help people sort out which of these might hit their sweet spot.

With a whole bunch of heroic work, we might be able to cram together all these services into a smaller number of packages, but I’d be astonished if that were a cost-effective piece of engineering.

So with respect, I have to disagree with Mr Branson. I’d go so far as to say that if you’re building a moderately complex piece of software that needs to integrate heterogeneous microservices and deal with variable sometimes-high request loads, then if your design doesn’t have a queuing component, quite possibly you’re Doing It Wrong.


From: JD (May 27 2019, at 10:28)

Great article. I'd also submit this "classic" from John Carmack:

https://github.com/ESWAT/john-carmack-plan-archive/blob/master/by_day/johnc_plan_19981014.txt

It was, and still is, an insight that introduced me to the proper use of queues, batch processing, and systems thinking.


From: Gordon Weakliem (May 28 2019, at 21:38)

Would you consider Kafka and Kinesis queuing? They're similar but semantically somewhat different (ordering guarantees within a partition) and generally imply higher scale. Same with SNS, it's a messaging tech that you could implement with a queue but not really semantically the same.


From: Rob Sayre (May 29 2019, at 13:23)

Martin Kleppmann's research on these problems is criminally underrated. His best basic idea is that you want to feed the database's replication log into a Kafka log, and then do all the async stuff from that. This tactic neatly addresses most of the points in those queue tweets.

If you look at the Debezium Connector for PostgreSQL, there are even instructions for Amazon RDS. :)
