Cloud Interop Session

I spent Tuesday at the Cloud Interop event organized by Steve O’Grady and David Berlind. Scientists say that even a negative result is useful in advancing knowledge; I’d go further and say that a wait-and-see attitude in the heat of a hype cycle is often optimal. By those criteria, this was successful. My attendee count peaked at 51.

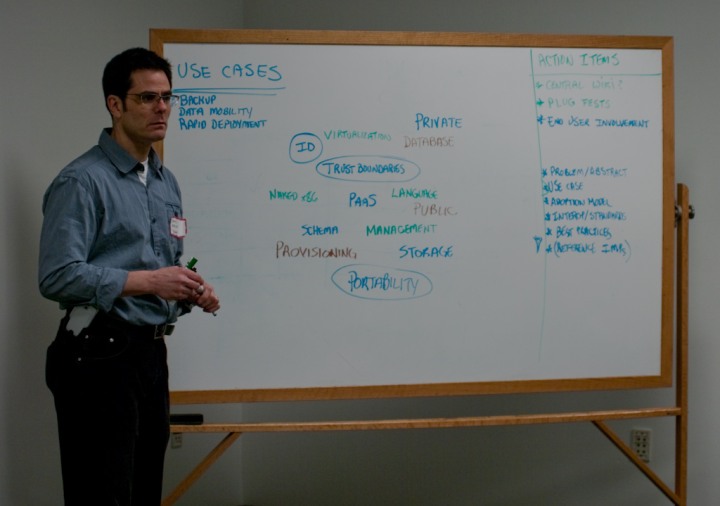

David Berlind trying to capture progress toward the end of the event.

Interop or Standards? · Here’s the problem: There is so much hype and arm-waving right now around the word “cloud” that it’s expanded to cover most aspects of IT. Thus if you want cloud interoperability, you essentially have to solve all the IT interop problems as a first step.

The conversation was labeled “interop”, but quickly made the subtle but important semantic shift to being about “standards”. With that in mind, it was appropriate that Bob Sutor, IBM’s chief standards honcho, opened up, saying sensible things about standards in general, and about the cloud, and urging people not to charge into creating another standards organization.

Bob Sutor.

It quickly became apparent, to me at least, that there were no specific problems around which formal interop or standards efforts were about to coalesce. However, there are a few parties who disagree with Bob and are working on setting up a new consortium or institute or whatever to try to own Cloud Standardization.

Take-Aways · I note that the vast majority of people who are actually using Cloud APIs in the real world are using Amazon Web Services. Amazon was notably unrepresented at the interop event; this is drearily familiar behavior by a popular incumbent in the context of interop efforts. Steve O’Grady reported Werner Vogels as saying that Amazon hasn’t figured out yet whether the AWS interfaces are intellectual property that they should protect, or not. That’s a very interesting question.

Also unrepresented were Microsoft, EMC, HP, and probably a few others.

I was fairly surprised when Anant Jhingran, who’s IBM’s CTO of something important-sounding, asserted that it’s too early for interoperability; what customers really want is “integration”. In my own conversations with potential customers I’ve encountered a visceral fear of lock-in. But Anant’s position is consistent; for example, see Cloud Standards.

Drat, I didn’t capture the source of this (paraphrased quote); speak up if it was you:

Businesses are open to spending money on technology to grow. But there are very tough limits on what they’re willing to invest in existing IT deployments and partnerships. Salesforce.com succeeded by routing around IT.

Here’s another random picture to give the flavor. People were packed in pretty tightly, and willing to shut up and listen.

Sun’s Take · We’ve been unspecific recently on the Cloud, but I will say this: We want to supply systems and storage and software to people who are building cloud offerings. As such, we’d like to see a market with minimal friction and lock-in, and a large number of successful and highly competitive players. So we’re prepared to support efforts in that direction.

Thanks · To Steve and David and the other people who helped put this on. We’ll be doing this again, I’m sure.

Comment feed for ongoing:

From: Tony Fisk (Jan 22 2009, at 01:07)

Jamais Cascio recently posted an interesting article on the problems associated with Cloud computing. He was particularly worried about *reduced* resilience:

*...Lots of big companies are hot for cloud computing right now, in order to sell more servers, capture more customers, or outsource more support. But there's a problem. As the company I was working with started to detail their (public) cloud computing ideas, I was struck by the degree to which cloud computing represents a technical strategy that's the very opposite of resilient, dangerously so.*

Source: http://www.openthefuture.com/2009/01/dark_clouds.html


From: Ross Reedstrom (Jan 22 2009, at 12:36)

I'd be interested in hearing Rich Wolski's take on the question: He's running the Eucalyptus project over at UCSB, doing an open-source reimplementation of AWS. I'm sure he'd be fascinated by the comments regarding Amazon's uncertain IP stance.

http://eucalyptus.cs.ucsb.edu/


From: Rich Wolski (Jan 22 2009, at 16:10)

Well, I'll try and do my best to alleviate the potential for rumor regarding the possibility of IP conflict between Amazon AWS and Eucalyptus.

In our view, Eucalyptus respects Amazon's IP in two important ways. First, it is not a "reverse engineered" version of AWS as it is not designed to operate on the same scale that AWS is. Put another way, we designed Eucalyptus to run in a typical academic cluster environment and not across several massively provisioned, geographically distributed data centers (as is AWS). If that scale were a feature of the target setting for Eucalyptus, we would have architected it very differently. Because it is not, we did not consider or try to deduce how AWS might be engineered as it must certainly take into account contingencies at frequencies that simply do not occur at the cluster scale.

Secondly, it has always been the intention of the project to provide a vehicle for stimulating cloud usage and experimentation in general, and AWS usage and experimentation in particular.

For example, we have encountered scientists and academics who would like to use AWS, but who must justify the expense (however paltry) to their funding agencies using local resources. These research groups have application code bases in which hundreds of person-years have been invested (read: several generations of graduate students and postdocs have authored the code). Eucalyptus can be used to demonstrate that these codes will work "well" (or at least, will work at all) once transitioned to AWS.

Thus we have proceeded under the assumption that we have not infringed on Amazon's IP with respect to AWS internal functionality and that we have designed a "tool" that facilitates greater AWS usage. It has never been our intention to harm Amazon's interests in doing so.

I know -- a long-winded response. Please consider it an occupational hazard.
